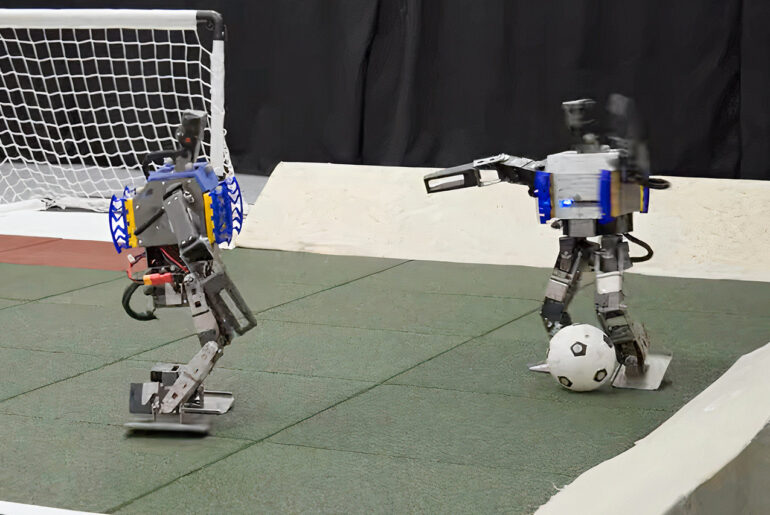

Google’s DeepMind team recently showcased a pair of AI-powered mini humanoid robots playing a game of soccer. These Robotis OP3 robots stand just 19 in (50 cm) tall, have 20 joints, and required just 240 hours of deep reinforcement learning to perform what you’re about to see.

Deep reinforcement learning combines reinforcement learning with deep neural networks, and the training here took place in two stages. In the first, the team trained two separate skill policies: one for getting up from the ground and one for scoring against an untrained opponent. In the second, agents were trained on full one-versus-one soccer. Once training in simulation was complete, the agents were transferred to the physical OP3 robots.

“In the [test] matches, the trained robots walked 181 percent faster, turned 302 percent faster, kicked the ball 34 percent faster, and took 63 percent less time to get up from a fall than robot agents working off a scripted baseline of skills,” said the researchers.
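To make these percentages easier to picture, the short Python sketch below turns each figure into a multiple of the scripted baseline. It assumes that "X percent faster" means the learned value is (1 + X/100) times the baseline, and that "Y percent less time" means the learned duration is (1 - Y/100) times the baseline duration. The function names are illustrative only.

```python
# Illustrative conversion of the reported improvements into multiples of the
# scripted baseline. Assumption: "X percent faster" = (1 + X/100) x baseline,
# and "Y percent less time" = (1 - Y/100) x baseline duration.

def speed_multiplier(percent_faster: float) -> float:
    """Learned speed divided by baseline speed."""
    return 1 + percent_faster / 100


def time_multiplier(percent_less_time: float) -> float:
    """Learned duration divided by baseline duration."""
    return 1 - percent_less_time / 100


reported_speedups = {"walking": 181, "turning": 302, "kicking": 34}

for skill, pct in reported_speedups.items():
    print(f"{skill}: {speed_multiplier(pct):.2f}x baseline speed")

get_up = time_multiplier(63)
print(f"getting up: {get_up:.2f}x baseline time "
      f"(about {1 / get_up:.1f}x as fast)")
```

Read this way, the learned robots walked at roughly 2.8 times the baseline speed, turned about 4 times as fast, and got up in a little over a third of the baseline time.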

[Source]